Meta has announced a host of new AI-powered bots, features and products that will be released across its messaging apps, the Meta Quest 3 and the upcoming Ray-Ban Meta smart glasses. The new features, from an AI assistant to photo editing, harness generative AI to make Meta's apps even more addictive.

Or, as Meta puts it, the new AI experiences and features “give you the tools to be more creative, expressive, and productive.”

A slew of AI-focused announcements came as part of Meta’s annual Connect conference, where the company announced its latest mixed reality headset and plans to release smart glasses in partnership with Ray-Ban.

All of the AI technologies are based on Llama 2, Meta’s family of open-access AI models released in July. The large language model is designed to generate text and code in response to prompts, and is trained on a mix of publicly available data, according to Meta. The company indicated that Llama 3 will be released in 2024.

Meta also announced at Connect its new image generator Emu, which it will use to power things like AI stickers and image editing.

Let’s dive into all the new ways Meta is using AI.

AI Assistant, powered by Bing

Meta’s AI Assistant is powered by the Llama 2 LLM. Pictured: Ahmad Al-Dahle, vice president of GenAI. Image credits: Meta

Meta’s AI Assistant is designed to provide you with real-time information and create photorealistic images from text prompts in just seconds. It can help plan a trip with friends in a group chat, answer general knowledge questions and search the Internet via Microsoft’s Bing to provide real-time web results.

With Llama 2, Meta said it created specialized datasets grounded in natural conversation so that AI systems would respond in a conversational, friendly tone.

“With Meta AI, we saw an opportunity to leverage this capability and create an assistant that can do more than just write poems,” Ahmad Al-Dahle, vice president of GenAI at Meta, said on Wednesday. “Behind Meta AI, we built an orchestrator. It can seamlessly detect user intent through the router and direct them to the right extension.”

The first extension will be web search, powered by Bing, to help with queries that require real-time information.
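Meta has not published how the orchestrator works internally. As a rough illustration of the general pattern described on stage (detect intent, then route to an extension), here is a minimal Python sketch. Every name in it (`detect_intent`, `orchestrate`, the `EXTENSIONS` table and the keyword list) is hypothetical, and the keyword check merely stands in for whatever learned classifier a production router would use.

```python
import re
from typing import Callable, Dict

# Hypothetical extensions; these are illustrative stubs, not Meta's code.
def web_search(query: str) -> str:
    return f"[web search] results for: {query}"

def chat(query: str) -> str:
    return f"[chat] reply to: {query}"

# Registry mapping an intent label to the extension that handles it.
EXTENSIONS: Dict[str, Callable[[str], str]] = {
    "web_search": web_search,
    "chat": chat,
}

# Assumption: a toy keyword heuristic in place of a real intent model.
REALTIME_HINTS = {"where", "latest", "today", "news", "near"}

def detect_intent(query: str) -> str:
    """Return the intent label for a query."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    return "web_search" if words & REALTIME_HINTS else "chat"

def orchestrate(query: str) -> str:
    """Route the query to the extension registered for its intent."""
    return EXTENSIONS[detect_intent(query)](query)

if __name__ == "__main__":
    print(orchestrate("Where can I get Eggs Benedict in San Francisco?"))
    print(orchestrate("Write a poem about brunch"))
```

Keeping the extensions in a registry means a new capability can be added by registering one more function, without touching the routing logic.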

“Whether you want to know the history of Eggs Benedict, how to make it, or even where to get it in San Francisco, Meta AI can help ensure you have access to the latest information through the power of search,” Al-Dahle said.

The AI assistant will be available in beta in the US on WhatsApp, Messenger and Instagram, and will soon be available on Ray-Ban Meta smart glasses and the Quest 3 VR headset.

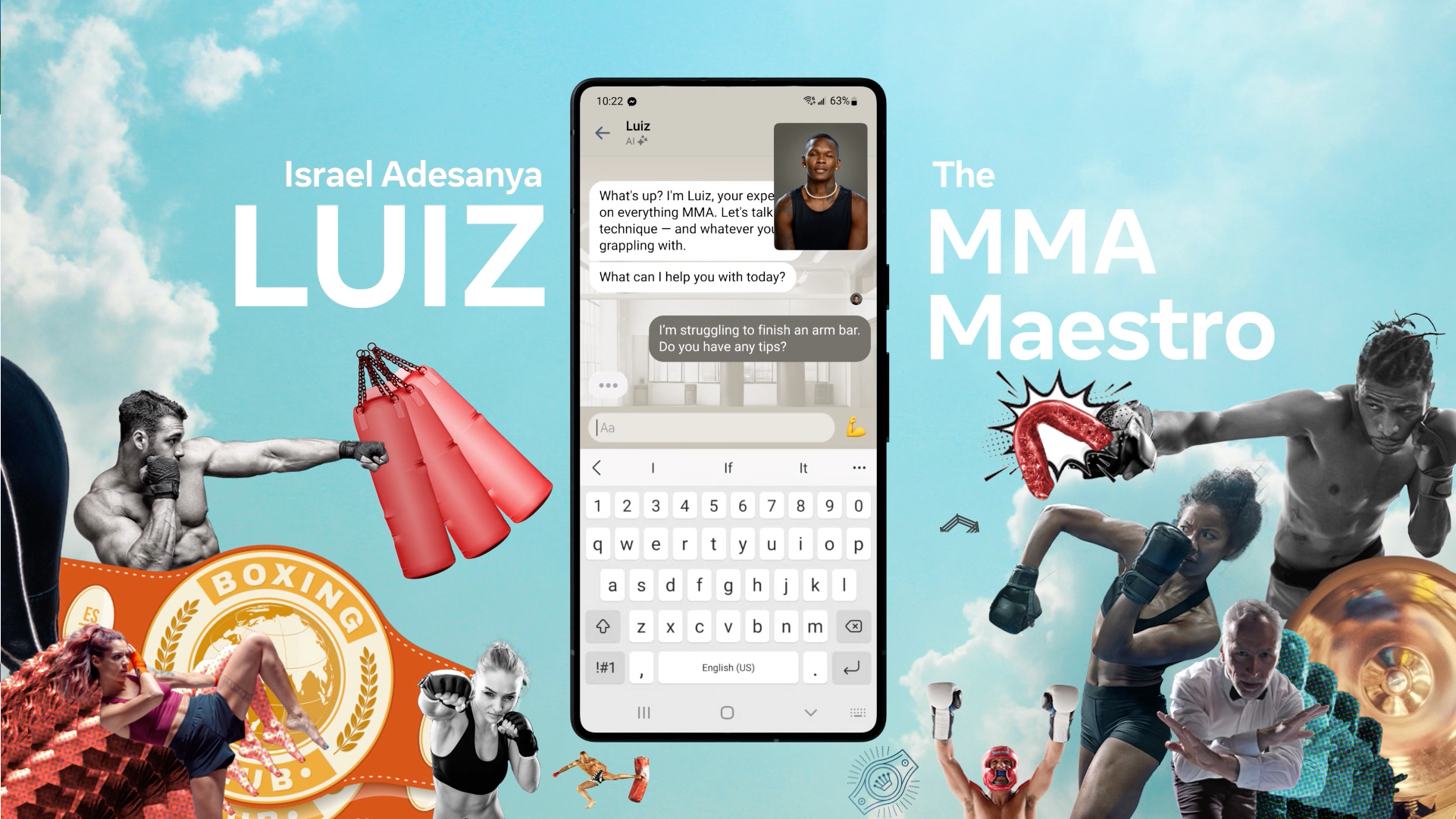

Personalized AI chatbots based on celebrities

Each AI character is based on a real-life celebrity or influencer. Image credits: Meta

Meta has released 28 AI characters that are based on famous personalities from across the worlds of sports, music, social media and more, but are built entirely with AI. You can think of them as topic-specific chatbots that you can message on WhatsApp, Messenger and Instagram. Each character is based on a celebrity or influencer. For example:

- The likeness of football star Tom Brady is used for a character named “Bru” who can talk to you about sports.

- Tennis player Naomi Osaka appears as “Tamika” to talk about all things manga.

- YouTube personality MrBeast plays “Zach” to be… a funny guy?

- MMA fighter Israel Adesanya appears as “Luiz” to talk about MMA.

- Model Kendall Jenner appears as “Billie” to play an older sister.

Meta’s AI characters are built on the Llama 2 LLM. Most of their knowledge bases are limited to information that largely existed before 2023, but Meta says it hopes to bring its Bing search functionality to the characters in the coming months.

The characters currently respond only in text; Meta says they will also be able to talk next year, but for now there is no audio. Any video element you see today and in the future is based on AI-generated animations. Meta filmed the people who represent the various AI characters and then used “generative techniques” to turn those disparate animations into a cohesive experience.

Meta did not explain to TechCrunch how it compensated celebrities for the use of their images.

AI Studio for businesses and creators

Meta’s AI Studio is available to businesses and creators. Pictured: Angela Fan, research scientist. Image credits: Meta

Meta’s AI Studio platform will allow companies to build AI-powered chatbots for the company’s various messaging services, including Facebook, Instagram and Messenger.

Starting with Messenger, AI Studio will allow companies to “build AI systems that reflect their brand values and improve customer service experiences,” Meta wrote in a blog post. On stage, Meta CEO Mark Zuckerberg explained that the use cases Meta envisions are primarily e-commerce and customer support.

AI Studio will be available in alpha to start, and Meta says it will expand the toolset further starting next year.

In the future, creators will also be able to use AI Studio to build AIs that “extend their virtual presence” across Meta’s apps. Meta noted that these AIs must be sanctioned and directly controlled by the creator.

Next year, Meta will be building a sandbox so anyone can try their hand at creating their own AI, something Meta will bring to the Metaverse.

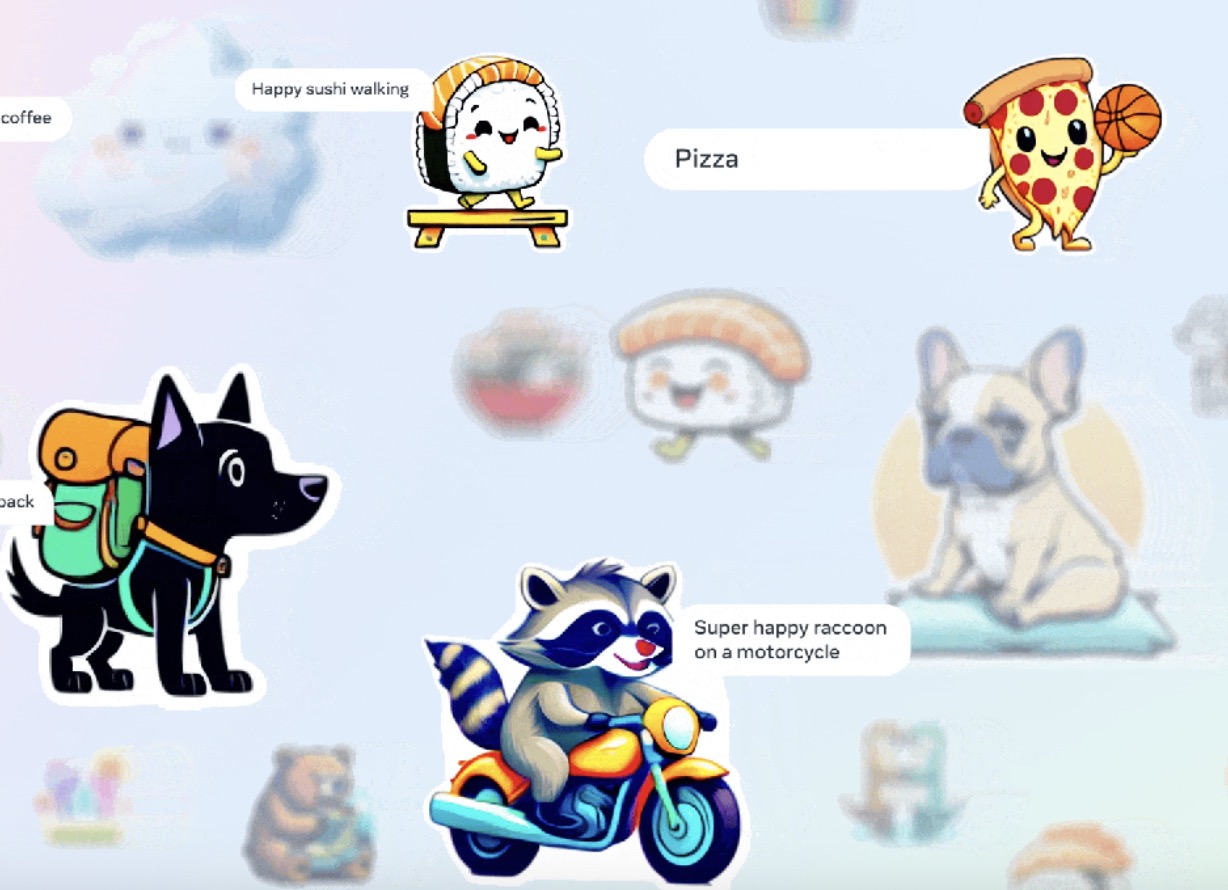

AI stickers, powered by Emu

Image credits: Meta

CEO Mark Zuckerberg announced that generative AI stickers will be coming to Meta’s messaging apps. The feature, powered by Meta’s new foundational image generation model, Emu, will allow users to create unique AI stickers within seconds across Meta apps including WhatsApp, Messenger, Instagram and even Facebook Stories.

“Every day people send hundreds of millions of stickers to express things in conversations,” Zuckerberg said. “And every conversation is a little different and you want to express subtly different emotions. But today we only have a fixed number, but with Emu now you have the ability to write whatever you want.”

To create a sticker, you type a description of the image you want to see into a text box. The feature was demonstrated in WhatsApp, where Zuckerberg showed off offbeat ideas such as “a Hungarian sheep dog driving an SUV.” Meta says it takes about three seconds on average to generate multiple options to share.

The company says that the feature will initially be available to English language users and will begin rolling out next month.

AI photo editing: Restyle and Backdrop

Image credits: Meta

Meta says you’ll soon be able to transform your photos or co-create AI-generated images with friends. The two new features, Restyle and Backdrop, will soon be available on Instagram in the US and are also powered by Emu.

Restyle lets you reimagine a photo’s visual style by typing prompts like “watercolor” or something more detailed, such as “a poster from magazines and newspapers, torn edges,” Meta explained in a blog post. On stage, Zuckerberg made experimental edits to a photo of his dog, Beast, using AI to turn it into origami and cross-hatched versions.

Meanwhile, Backdrop changes the scene or background of your photo using prompts.

Meta says images created with these tools will indicate the use of AI “to reduce the chances of people confusing them with human-generated content.” The company said it is also experimenting with forms of visible and invisible markers.

A word about safety

“If you’ve spent any time playing with conversational AI, as you probably know, they have the ability to say things that are inaccurate or even inappropriate. And that can happen to us, too,” Al-Dahle said.

On stage, Al-Dahle described “thousands of hours” of red-teaming and working with prompts to train the AI assistant and characters to steer clear of questionable topics. Red-teaming is an iterative process in which you try to get the model to say harmful things, apply fixes, and repeat.
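The probe, fix and repeat cycle can be pictured with a toy Python loop. This is only a sketch under heavy assumptions: the "model" is a stub function, the harm check is a string marker rather than a real classifier or human reviewer, and the "fix" is a simple refusal list. None of it reflects Meta's actual tooling, and all names are hypothetical.

```python
from typing import Callable, List, Set

HARMFUL_MARKER = "UNSAFE"  # assumption: stands in for a real harm classifier

def make_model(blocked: Set[str]) -> Callable[[str], str]:
    """Build a stub model that refuses any prompt in the blocked set."""
    def model(prompt: str) -> str:
        if prompt in blocked:
            return "I can't help with that."
        # Toy behavior: prompts containing "attack" elicit a harmful reply.
        if "attack" in prompt:
            return f"{HARMFUL_MARKER}: {prompt}"
        return f"OK: {prompt}"
    return model

def red_team(prompts: List[str], max_rounds: int = 10) -> Set[str]:
    """Probe the model, patch the failures, and repeat until none remain."""
    blocked: Set[str] = set()
    for _ in range(max_rounds):
        model = make_model(blocked)
        failures = {p for p in prompts if model(p).startswith(HARMFUL_MARKER)}
        if not failures:
            break  # no harmful outputs found in this round
        blocked |= failures  # the "apply fixes" step
    return blocked

if __name__ == "__main__":
    print(sorted(red_team(["attack one", "hello", "attack two"])))
```

In practice each round would also bring new adversarial prompts, since a fix for one phrasing often leaves a nearby phrasing open.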

Meta says it’s also releasing system cards alongside its AI so people can understand “what’s in it and how it’s built.”